TheBrain on Linux

Well I didn't see this coming: TheBrain is now on Linux. I've been using TheBrain for nearly 20 years. And I keep using it. In fact, the only thing that finally forced me to stop was my foray into Linux. Now ...

Curated river of news

Latest posts from blogs I follow

OpenAI confirms a confidential S-1 submission to the SEC and has not yet determined timing for further action.

Apple today announced a major overhaul of its Apple Intelligence platform, revealing a new architecture built on foundation models developed in collaboration with Google using the technologies behind the Gemini family. The new architecture centers on Apple Foundation Models co-developed ...

On 14 June 2026, Swiss citizens will vote on the popular initiative: ‘No to a Switzerland with 10 million! (Sustainability Initiative)’.

A three-axis rating gauge: the customization spoke longest, integration medium, competence shortest. I'm increasingly rating the AI we're all chasing on a few major axes: 1. How CUSTOMIZED is the harness to you, ...

Run AI models in your app on Apple silicon.

The View from the Office. I met up with Sauli Kiviranta, founder and CEO of Delta Cygni Labs, in Union Square after grabbing an iced coffee from the Blue Dove truck. Sauli flew in from Finland for NYTechWeek and was ...

Next-generation Apple Intelligence and Siri AI bring helpful features to iOS 27, iPadOS 27, macOS Golden Gate, watchOS 27, and visionOS 27.

The bill is expected to blanket-ban companies and startups from selling people's precise location data across the state.

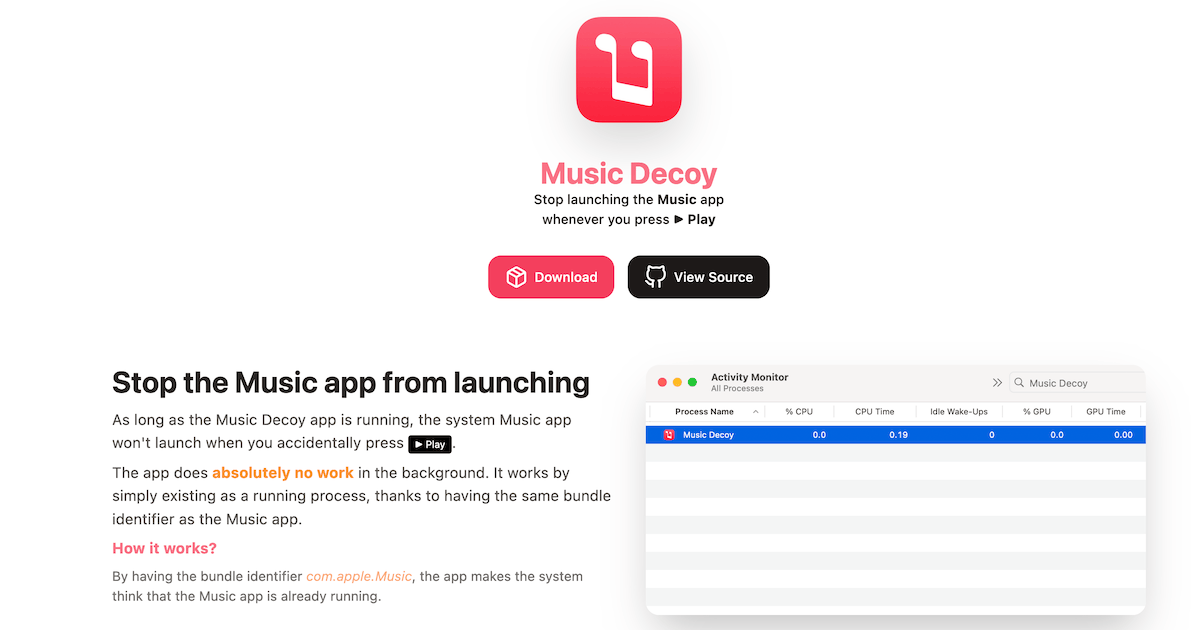

Stop launching the Music app whenever you press ▶ Play

I've never mastered the combat of Sekiro and Sifu. They're games that demand you recognise an incoming attack and match it with millisecond perfect parries and dodges. Success sees you turn a foe's assaults against them, ducking under their swinging ...

foodwatch laboratory tests reveal the presence of pesticide residues in everyday foods — including substances not approved in the EU.

If you liked this piece, you should subscribe to my premium newsletter. It’s $70 a year, or $7 a month, and in return you get a weekly newsletter that’s usually anywhere from 5,000 to 18,000 words, including vast, detailed analyses ...

Of all the Resident Evil remakes to burst forth from Capcom like zombie-spawn in recent years, I am most intrigued by the just-unveiled Resident Evil Veronica. The original game, released in 2000 for sixth generation consoles, is an important entry ...

MiMo, in collaboration with TileRT, releases the UltraSpeed mode of Xiaomi MiMo-V2.5-Pro — breaking 1000 tokens/s generation speed on a 1T-parameter model for the first time on commodity GPUs through extreme model-system codesign.

xAI is renting huge amounts of GPU capacity to Anthropic and Google. Financial engineering ahead of the SpaceX IPO, a real compute shortage, or a genuine datacentre advantage? Probably all three.

I've said one and mean another, and I've used one when I needed another. Comparing scroll-driven animations, scroll-triggered animations, container query scroll states, and view transitions for my future self. Scroll-Driven, Scroll-Triggered, Scroll States, and View Transitions originally handwritten and ...

Library maintainers may feel overwhelmed by the plurality of type checkers that exist. We offer some guidance on how to focus their efforts where they matter most.

There are about a thousand and one debates about the ecology of AI, the ethics of AI, the governance of AI. As the technology advances, the debate with the slipperiest footing is on the actual efficacy of AI: does this ...

Social media platforms used to be about communication between friends. The business model is to increase the time people spend on their apps and increase ad revenue.

Zen has been my default browser for a while now. I like it, but lately it's been getting flaky. Pages would seize up or otherwise behave badly. Tabs and bookmarks stopped syncing properly. Little stuff, mostly, but annoying just the ...

A personal collection of good public-domain reads. Free to read, free to keep.

We’ve documented more than 450 instances of apparent data manipulation in Thermo’s catalog

You've probably heard about how (micro)plastics are poisoning everything, from our land and water, to our bodies, and of the absolute malfeasance of the oil industry. Thus, as any reasonable

A week in which some things happen and some things do not happen, much like other weeks. Current situation: The pain of having children who become driving teenagers is the...

Apple’s developer message used to be that it was not just easy to develop apps for their platforms, but that it was easy to develop good idiomatically native apps. That’s still true for AppKit and UIKit, but it’s never been ...

Release: datasette-agent-edit 0.1a0 I'm planning several plugins for Datasette Agent which can make edits to existing pieces of text - things like collaborative Markdown editing, updating large SQL queries, and editing SVG files. Agentic editing of text is a little ...

I'm not even going to apologize. I was experiencing SSG fatigue and thought I'd see what it would take to migrate all of Eleventy's Markdown posts to Ghost. Claude made it surprisingly easy. So easy that I just went for ...

It's hard to conceive of a nastier workplace than Mouthwashing's spaceship - that delirious, foam-clogged labyrinth of cracked sunsets and fire-freshness - but developers Wrong Organ are certainly upping the ante with their new game Carcass Clad. Announced just today, ...

Code isn’t just a way to implement a design, it’s a way to find one. With an interface, you have to use it, feel it, interact with it, and poke at it to see the relationships between things. Change X, ...